Beyond Traditional Medical References: The Evolution of Clinical Decision Support

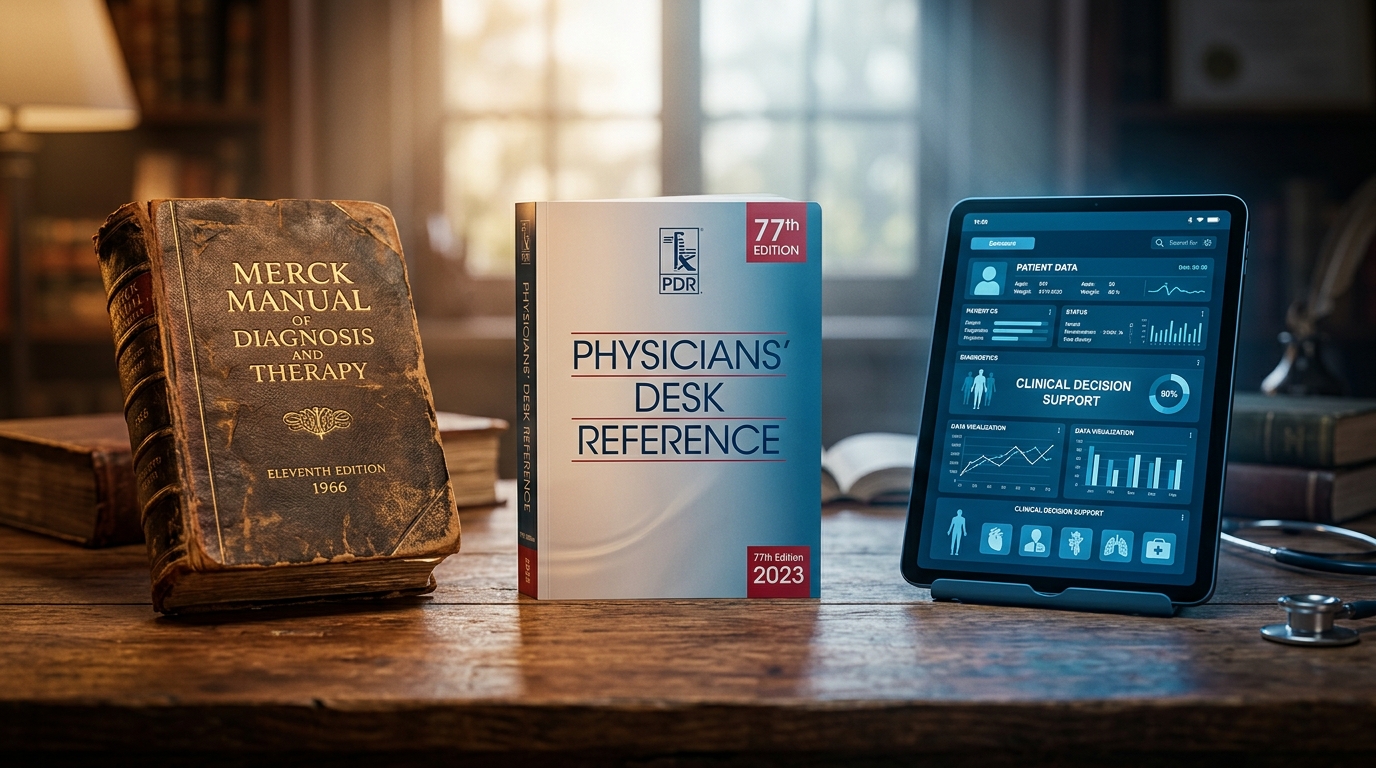

The First Generation: Textbooks and Expert Knowledge

For most of modern medicine's history, clinical knowledge was stored in textbooks. Harrison's Principles of Internal Medicine, first published in 1950, became the standard reference for generations of internists. Cecil Textbook of Medicine, Sabiston Textbook of Surgery, Nelson Textbook of Pediatrics: each specialty had its authoritative tome, updated every three to five years, written by recognized experts, and physically present on the shelf of every resident's call room.

The textbook model had clear strengths. Content was written by domain experts. Editorial review was rigorous. The synthesis was comprehensive — a single chapter on heart failure might integrate physiology, pharmacology, and clinical trials into a coherent narrative. For a physician in 1990 encountering a condition they had not seen since residency, Harrison's was often the best available resource.

But textbooks had a fundamental limitation: they were static. A textbook published in January 2020 could not contain the results of a pivotal trial published in March 2020. The median lag between a major trial publication and its incorporation into a major textbook edition was estimated at 5.5 years by Antman et al. in a frequently cited analysis in JAMA (1992). For rapidly evolving fields — oncology, infectious disease, cardiology — this meant that the textbook on the shelf might be actively contradicted by current evidence.

The textbook model also assumed a physician with time. Reading a 40-page chapter on heart failure to answer a specific question about SGLT2 inhibitor dosing in a patient with CKD was not efficient. The knowledge was there, somewhere in those pages, but finding it in the middle of a busy clinic was impractical.

The Second Generation: Expert-Authored Digital References

UpToDate, founded in 1992 by Burton Rose, a nephrologist at Harvard, was built on a simple insight: physicians needed current, evidence-based answers to specific clinical questions, delivered faster than a textbook could provide. The model was revolutionary for its time — expert authors, typically academic physicians, wrote and continuously updated topic reviews that synthesized the current evidence into practical clinical guidance.

By 2025, UpToDate had become the dominant clinical reference tool in medicine, with over 2 million physician subscribers worldwide. Its content covers more than 12,000 clinical topics, written by over 7,400 physician authors. Observational studies have associated UpToDate use with improved clinical outcomes: a 2012 study by Isaac et al. in the Journal of Hospital Medicine found that hospitals with UpToDate subscriptions had lower mortality rates and shorter lengths of stay, with a 0.5% absolute reduction in in-hospital mortality.

The UpToDate model solved the currency problem that textbooks could not. When DAPA-HF was published in 2019, the relevant UpToDate topics were updated within weeks. When COVID-19 treatment evidence evolved rapidly in 2020 and 2021, UpToDate's editorial team sometimes published updates daily. The expert-authored, continuously updated model was a genuine advance.

But this second generation had its own limitations:

- Single-specialty framing. UpToDate topics are organized by specialty. The heart failure topic lives in cardiology. The CKD topic lives in nephrology. The diabetes management topic lives in endocrinology. For a physician managing a patient with all three conditions simultaneously, the information is fragmented across multiple topic pages with no cross-synthesis. The physician must mentally integrate recommendations from three separate topics — and identify where those recommendations conflict.

- Generic recommendations. UpToDate provides evidence-based guidance for the average patient with a given condition. It does not easily handle patient-specific nuances: "What does the evidence say about empagliflozin in a 72-year-old with HFpEF, eGFR 34, on apixaban for AF, with prior GI bleeding?" This question requires subgroup data from specific trials, interaction analysis, and risk-benefit weighing that UpToDate's topic-based format is not designed to provide.

- Access barriers. UpToDate subscriptions cost $559/year for individual physicians (2026 pricing). Institutional licenses are significantly more expensive. As we discuss in our review of free clinical tools available to physicians, this cost creates a real access disparity, particularly for physicians in smaller practices or resource-limited settings.

- Search limitations. UpToDate's search function works best when you know what topic you are looking for. It is less effective for complex, multi-system clinical questions that do not map neatly to a single topic page.

From Expert-Authored Content to Evidence Synthesis

The third generation of clinical decision support emerged from a recognition that clinical questions were outgrowing the topic-based model. Physicians were not asking "Tell me about heart failure" — they were asking "Given this specific patient with these specific comorbidities, what does the evidence support?" The shift was from reference lookup to evidence synthesis.

Several platforms emerged in this space. DynaMed offered a similar model to UpToDate but with a more systematic evidence-grading approach. Isabel Healthcare provided differential diagnosis support. VisualDx focused on dermatological decision support with image-based diagnosis. Each addressed specific limitations of the general-reference model.

The more significant shift was the entry of search-based tools that attempted to synthesize evidence directly from the literature rather than from pre-written expert summaries. These tools take a clinical question, search the medical literature, and attempt to construct an evidence-based answer in real time. The promise was compelling: instead of relying on whether an expert author had recently updated a topic page, the tool would go directly to the source literature.

The limitation of search-based approaches became apparent quickly. Searching the literature and synthesizing evidence are fundamentally different tasks. A search engine can find relevant papers; converting those papers into a coherent clinical recommendation requires understanding study design, evaluating quality, weighing conflicting evidence, and applying the findings to a specific clinical context. Early search-based tools often returned a list of papers rather than a synthesized answer, shifting the synthesis burden back to the physician.

The Limitations of Search-Based Clinical Tools

Search-based clinical tools — including general-purpose language models applied to medical questions — face several structural challenges that limit their clinical utility:

- The citation reliability problem. General-purpose language models generate plausible-sounding citations that may not correspond to real papers. As documented in our analysis of citation hallucination in clinical tools, fabrication rates as high as 47% have been reported (Bhattacharyya et al., Cureus, 2023). This is not a minor accuracy issue — it is a fundamental trust problem that undermines the entire evidence-based framework.

- Single-pass answers. Most search-based tools answer a question once, from a single perspective. They do not trace the implications of a finding across related domains. If a study on drug A has implications for the management of condition B in the same patient, a search-based tool will typically miss that connection unless the physician specifically asks about it.

- No evidence hierarchy. Search tools that return papers without grading the evidence leave the physician to determine whether a case report and a multi-center RCT should carry equal weight. The synthesis step — evaluating, weighing, and integrating — remains a manual task.

- Recency bias. Tools that search the literature in real time may overweight recent publications and underweight established evidence. A 2024 single-center study does not supersede a 2019 landmark RCT, but a search algorithm may rank the newer paper higher simply because it is more recent.
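
The evidence-hierarchy and recency points above can be made concrete with a small ranking sketch. The `Paper` type, the `DESIGN_WEIGHT` values, and both function names are illustrative assumptions, not a published grading scheme or any particular tool's algorithm. The sketch only shows how ranking by study design first, with publication year as a tie-breaker, differs from ranking by date alone.

```python
from dataclasses import dataclass

# Illustrative weights (an assumption for this sketch): higher = stronger design.
DESIGN_WEIGHT = {
    "systematic_review": 5,
    "multicenter_rct": 4,
    "single_center_study": 2,
    "case_report": 1,
}


@dataclass(frozen=True)
class Paper:
    title: str
    design: str  # must be a key of DESIGN_WEIGHT
    year: int


def rank_by_recency(papers):
    """Naive ranking: newest first, ignoring study design."""
    return sorted(papers, key=lambda p: p.year, reverse=True)


def rank_by_evidence(papers):
    """Strongest design first; publication year only breaks ties."""
    return sorted(
        papers,
        key=lambda p: (DESIGN_WEIGHT[p.design], p.year),
        reverse=True,
    )


# Hypothetical papers mirroring the example in the text.
papers = [
    Paper("Single-center study", "single_center_study", 2024),
    Paper("Landmark RCT", "multicenter_rct", 2019),
    Paper("Case report", "case_report", 2025),
]

print([p.title for p in rank_by_recency(papers)])
print([p.title for p in rank_by_evidence(papers)])
```

With these hypothetical inputs, the recency ranking puts the 2025 case report first, while the design-aware ranking puts the 2019 landmark RCT first. A real system would need a far richer quality assessment than a single weight per design.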

Cross-System Reasoning: The Next Step

The emerging generation of clinical decision support tools is defined by a capability that previous generations lacked: the ability to reason across clinical domains simultaneously. Rather than answering a cardiology question from cardiology evidence and a nephrology question from nephrology evidence, these tools are designed to identify where evidence from one domain modifies the answer in another.

Consider a clinical question about anticoagulation in a patient with atrial fibrillation, stage 3b CKD, and a history of GI bleeding. The cardiology evidence supports anticoagulation (CHA2DS2-VASc-based risk reduction). The gastroenterology evidence flags elevated bleeding risk. The nephrology evidence modifies the pharmacokinetics of the anticoagulant options (renal clearance varies by agent). A tool that reasons across these domains simultaneously can surface the specific intersection of evidence: which anticoagulant has the most favorable risk-benefit profile for this patient's specific combination of conditions?

This is not a hypothetical capability. It requires three specific technical features: the ability to search evidence across specialty boundaries, the ability to identify where evidence from one domain is relevant to a question in another, and the ability to synthesize those cross-domain findings into a coherent, citation-backed recommendation. It also requires that every citation in the synthesized answer be verified — because cross-system reasoning with fabricated citations is worse than no reasoning at all.
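
As a rough illustration of the first two features, the sketch below tags each piece of evidence with its specialty and the decisions it bears on, then collects everything relevant to one decision for one patient, regardless of specialty. The `Finding` type, its field names, and `cross_domain_findings` are hypothetical, and the summaries simply restate the anticoagulation example above. It is a minimal sketch under those assumptions, not a description of how any particular platform works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    specialty: str      # domain the evidence comes from
    condition: str      # patient condition the evidence concerns
    affects: frozenset  # clinical decisions this evidence bears on
    summary: str


def cross_domain_findings(findings, patient_conditions, decision):
    """Group findings relevant to `decision` for this patient by specialty."""
    grouped = {}
    for f in findings:
        if f.condition in patient_conditions and decision in f.affects:
            grouped.setdefault(f.specialty, []).append(f)
    return grouped


# Hypothetical evidence entries based on the example in the text.
findings = [
    Finding("cardiology", "atrial fibrillation",
            frozenset({"anticoagulation"}),
            "Supports anticoagulation based on stroke risk."),
    Finding("gastroenterology", "prior GI bleeding",
            frozenset({"anticoagulation"}),
            "Flags elevated bleeding risk."),
    Finding("nephrology", "CKD stage 3b",
            frozenset({"anticoagulation"}),
            "Renal clearance varies by agent."),
    Finding("endocrinology", "diabetes",
            frozenset({"glycemic control"}),
            "Not relevant to this decision."),
]

patient = {"atrial fibrillation", "CKD stage 3b", "prior GI bleeding"}
grouped = cross_domain_findings(findings, patient, "anticoagulation")

for specialty, items in grouped.items():
    for item in items:
        print(f"{specialty}: {item.summary}")

# More than one specialty means the answer needs cross-domain synthesis.
needs_synthesis = len(grouped) > 1
```

The third feature, turning these grouped findings into a single weighed recommendation, is the hard part and is deliberately left out of this sketch.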

What Physicians Should Expect from Clinical Decision Support in 2026

The evolution from textbooks to expert-authored references to search-based tools to clinical intelligence platforms reflects a consistent trajectory: each generation has moved closer to answering the physician's actual question rather than providing information that the physician must then synthesize themselves.

In 2026, a clinical decision support tool should meet several baseline expectations:

- Patient-specific evidence. The tool should handle questions that include specific comorbidities, medications, and lab values — not just generic disease topics. The evidence for a 45-year-old with new-onset hypertension and no comorbidities is different from the evidence for a 78-year-old with hypertension, CKD, heart failure, and diabetes.

- Verified citations. Every citation should be confirmed against an indexed database before it reaches the physician. This is non-negotiable in 2026.

- Cross-specialty synthesis. When a clinical question spans multiple specialties — and most complex clinical questions do — the tool should synthesize evidence across those specialties automatically, surfacing conflicts and connections rather than siloing information by domain.

- Accessible pricing. Cost should not be a barrier to accessing the best available clinical evidence. The era of $500/year subscriptions as the only path to synthesized clinical evidence is ending. The bench-to-bedside gap cannot be closed if the tools designed to close it are inaccessible to a significant fraction of physicians.
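
The verified-citations expectation in the list above can be sketched as a gate that an answer must pass before it is shown. Everything here is an assumption for illustration: the identifiers are placeholders, the "index" is an in-memory set standing in for a real bibliographic database, and `verify_citations` and `release_answer` are hypothetical names.

```python
def verify_citations(cited_ids, indexed_ids):
    """Split cited identifiers into those found in the index and those not."""
    verified = [c for c in cited_ids if c in indexed_ids]
    unverified = [c for c in cited_ids if c not in indexed_ids]
    return verified, unverified


def release_answer(answer_text, cited_ids, indexed_ids):
    """Return the answer only if every citation is found in the index."""
    _, unverified = verify_citations(cited_ids, indexed_ids)
    if unverified:
        raise ValueError(f"unverified citations: {unverified}")
    return answer_text


# Placeholder identifiers; a real system would query an indexed database.
indexed_ids = {"id-001", "id-002", "id-003"}

verified, unverified = verify_citations(["id-001", "id-999"], indexed_ids)
print(verified, unverified)

print(release_answer("Answer text.", ["id-001", "id-002"], indexed_ids))
```

Checking that an identifier exists is only the first step. A fuller check would also confirm that the cited paper actually supports the claim attached to it.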

Ailva was built as a clinical intelligence platform that meets these expectations: verified citations, cross-system reasoning, patient-specific evidence synthesis, and free access for all NPI-verified physicians. It represents the next step in the evolution described here: not a replacement for the work that UpToDate and other second-generation tools pioneered, but a continuation of the trajectory toward answering the specific question, for the specific patient, with evidence you can trust.

Founder of Ailva.ai | Former Director of Research and Author of 200+ Medically Reviewed Articles | Editor-in-Chief of EudaLife Magazine